ScrapeStorm

ScrapeStorm is an AI-powered visual web scraper that auto-identifies lists, tables, and pagination. It runs on Windows, Mac, and Linux and exports to Excel, MySQL, Google Sheets, and other targets.

Summary

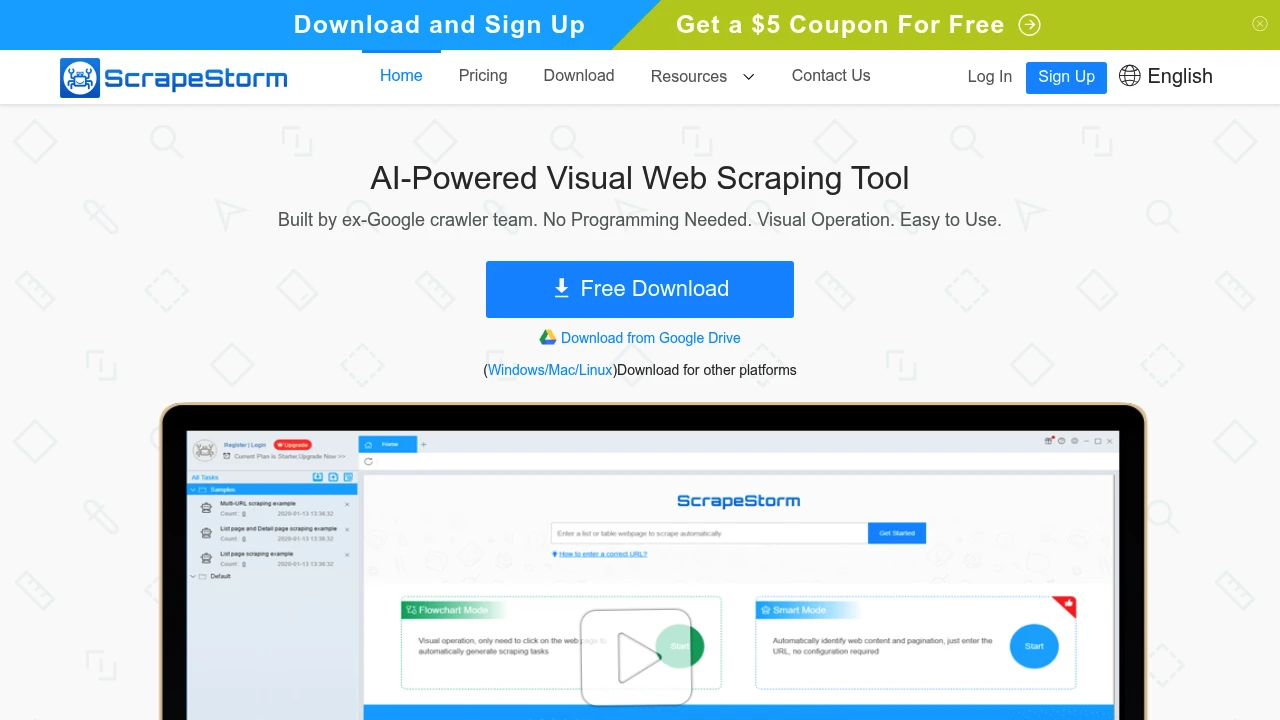

ScrapeStorm is an AI-driven web scraping tool built by ex-Google crawler engineers. It lets users extract data from websites through a visual point-and-click interface, without writing code.

What is ScrapeStorm?

ScrapeStorm is a web data extraction tool powered by artificial intelligence algorithms that automatically identify list data, tabular data, and pagination buttons. Users enter URLs or click webpage elements as the software prompts them, and the tool generates the scraping rules, including complex ones. Tasks are saved to the cloud automatically and are available after logging in on any supported platform.

Core Capabilities

- AI intelligent identification: Auto-detects lists, tables, links, images, prices, phone numbers, emails, and more

- Visual operation: Click, enter text, scroll, select from dropdowns, wait for page load, loop, and evaluate conditions

- Multi-format export: Excel, CSV, TXT, HTML, MySQL, MongoDB, SQL Server, PostgreSQL, WordPress, Google Sheets

- Enterprise features: Scheduling, IP rotation, auto export, file download, speed boost engine, group start/export, Webhook, RESTful API, SKU scraper

- Cloud account: Tasks auto-sync to cloud servers, accessible from any computer

- Cross-platform: Windows, Mac, Linux support with identical feature sets
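Exported files usually need some cleanup before analysis. The following is a minimal sketch, assuming a CSV export with hypothetical `title` and `price` columns (the real column names depend on how the task is configured). It uses only the Python standard library and does not call any ScrapeStorm API.

```python
import csv
import io
from decimal import Decimal, InvalidOperation


def parse_price(raw):
    """Convert a scraped price string such as '$1,299.00' to Decimal, or None."""
    # Keep digits and the decimal point; drop currency symbols and separators.
    cleaned = "".join(ch for ch in raw if ch.isdigit() or ch == ".")
    try:
        return Decimal(cleaned)
    except InvalidOperation:
        return None  # e.g. 'N/A' or an empty cell


def load_export(csv_text):
    """Parse exported CSV text into a list of cleaned row dictionaries."""
    reader = csv.DictReader(io.StringIO(csv_text))
    rows = []
    for row in reader:
        # 'title' and 'price' are assumed column names, not fixed by the tool.
        rows.append({
            "title": row["title"].strip(),
            "price": parse_price(row["price"]),
        })
    return rows


# Hypothetical export content, used only for illustration.
SAMPLE_EXPORT = 'title,price\nWidget A,$9.99\nWidget B,"$1,299.00"\nWidget C,N/A\n'

if __name__ == "__main__":
    for item in load_export(SAMPLE_EXPORT):
        print(item)
```

Using `Decimal` rather than `float` avoids rounding surprises when prices are later summed or compared.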

Pros

- AI auto-detects data structures, drastically reducing manual configuration

- Visual click interface mirrors natural web browsing behavior

- All major operating systems supported with seamless platform switching

- Cloud sync eliminates device dependency

- Enterprise-grade scraping services and API integration available

Cons

- Free tier has limited features and scraping quotas

- Complex dynamic sites may require manual rule adjustments

- First-time users face a learning curve

- Advanced features (IP rotation, API) require paid plans

Decision Guidance

Use when: You need regular extraction of large-scale structured data from e-commerce, news, or social media sites, especially if you lack programming skills but require automated data collection.

Consider alternatives: If you need highly customized crawler logic or have an in-house dev team, open-source frameworks like Scrapy or Puppeteer may fit better. For one-off small-scale extraction, browser extensions may be more economical.
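To give a sense of what the code-based route involves, here is a minimal sketch of hand-written list extraction using only Python's standard library. It is not how ScrapeStorm, Scrapy, or Puppeteer work internally; the class name, function name, and sample HTML are hypothetical and chosen for illustration.

```python
from html.parser import HTMLParser


class ListItemParser(HTMLParser):
    """Collect the text of every top-level <li> element in a page."""

    def __init__(self):
        super().__init__()
        self.items = []     # finished item texts
        self._depth = 0     # greater than 0 while inside an <li>
        self._buffer = []   # text fragments of the current item

    def handle_starttag(self, tag, attrs):
        if tag == "li":
            self._depth += 1
            if self._depth == 1:
                self._buffer = []

    def handle_endtag(self, tag):
        if tag == "li" and self._depth > 0:
            self._depth -= 1
            if self._depth == 0:
                # Collapse runs of whitespace into single spaces.
                text = " ".join("".join(self._buffer).split())
                if text:
                    self.items.append(text)

    def handle_data(self, data):
        if self._depth > 0:
            self._buffer.append(data)


def extract_list_items(html):
    """Return the text of each list item found in the given HTML string."""
    parser = ListItemParser()
    parser.feed(html)
    parser.close()
    return parser.items


# Hypothetical page fragment, used only for illustration.
SAMPLE_HTML = """
<ul>
  <li><a href="/p/1">Widget A</a> <span>$9.99</span></li>
  <li><a href="/p/2">Widget B</a> <span>$14.50</span></li>
</ul>
"""

if __name__ == "__main__":
    print(extract_list_items(SAMPLE_HTML))
```

Even this small case requires explicit handling of nesting and whitespace, and it does not cover pagination, JavaScript-rendered content, or rate limiting. That is the trade-off: full control over the logic in exchange for writing and maintaining it yourself.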